
Advanced Frame Rate Converter (AFRC)

MSU Graphics & Media Lab (Video Group)

Algorithm, ideas: Dr. Dmitriy Vatolin

Algorithm, implementation: Sergey Grishin

Frame rate conversion (FRC) algorithms are used in compression, video format conversion, quality enhancement, stereo vision, and other areas. The most common application is format conversion, where FRC changes the frame rate of a video stream. This is needed, for example, to play back a 50 Hz video sequence on a TV set with a 100 Hz frame rate. FRC makes object motion smoother and therefore more pleasant to watch. It also makes it possible to slow down playback, which makes object movements easier to follow.

Pic.1 Basic scheme of FRC

An FRC algorithm increases the total number of frames in a video sequence. It does so by inserting new (interpolated) frames between each pair of neighboring frames of the original sequence (see pic. 1). The number of interpolated frames between each pair of original frames is determined by the interpolation factor, a user-defined parameter that can be any positive integer.
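The frame insertion scheme can be illustrated with a short sketch that lists the temporal positions of the output frames. This is only an illustration, not part of AFRC: the function name `output_timeline` is hypothetical, and the sketch assumes that the interpolation factor multiplies the frame rate, so that `factor - 1` new frames are placed between each pair of original frames. The page does not state the exact convention.

```python
from fractions import Fraction


def output_timeline(num_frames, factor):
    """Return a list of (position, is_interpolated) for every output frame.

    Positions are measured in units of the original frame interval.
    Assumption (not confirmed by the source): `factor` multiplies the
    frame rate, so factor - 1 frames are inserted between each pair of
    neighboring original frames.
    """
    if num_frames < 1 or factor < 1:
        raise ValueError("num_frames and factor must be positive integers")
    timeline = []
    for i in range(num_frames - 1):
        # Original frame i keeps its position.
        timeline.append((Fraction(i), False))
        # Interpolated frames are spaced evenly up to the next original frame.
        for j in range(1, factor):
            timeline.append((Fraction(i) + Fraction(j, factor), True))
    # The last original frame has no successor, so nothing follows it.
    timeline.append((Fraction(num_frames - 1), False))
    return timeline
```

Under this assumption, `output_timeline(3, 2)` describes five output frames at positions 0, 1/2, 1, 3/2 and 2, which corresponds to doubling the frame rate as in the 50 Hz to 100 Hz case.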

The main advantage of the developed algorithm is its use of several quality enhancement techniques, such as adaptive artifact masking, black stripe processing, and occlusion tracking.

Examples

This section presents results of the developed algorithm and compares it with methods from other companies.

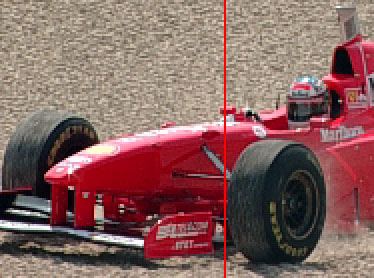

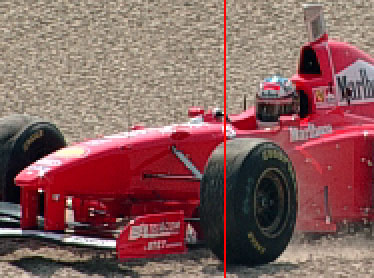

The first example (pic. 2-4) shows the result obtained on the 'schumacher' test video sequence. The interpolated frame (see pic. 4) is calculated by the developed algorithm from two reference frames (pic. 2, 3). The interpolated frame shown is located midway in time between the reference frames.

Pic.2 Previous reference frame

Pic.3 Next reference frame

Pic.4 Interpolated frame

A quality comparison of the developed method with other companies' methods is shown in the pictures below.

The first comparison shows the result for the 'stefan' test video sequence. The interpolated frames are obtained by converting the input video stream with an interpolation factor of 2. The interpolated frame shown is number 339 in the output video sequence.

Pic.5 Previous reference frame

Pic.6 Next reference frame

Pic.7 Retimer result

Pic.8 Motion Perfect result

Pic.9 Twixtor result

Pic.10 AFRC result

The next example shows the result for the 'foreman' test video sequence. The interpolated frames are obtained by x1 conversion of the input video stream: the sequence is first decimated by a factor of 2, and the dropped frames are then interpolated. The interpolated frame shown is number 171 in the output video sequence.

Pic.11 Previous reference frame

Pic.12 Next reference frame

Pic.13 Retimer result

Pic.14 Motion Perfect result

Pic.15 Twixtor result

Pic.16 AFRC result

The next diagram (see pic. 17) shows the results of an objective comparison. The objective quality of the processed sequences was measured for each method using Y-PSNR, computed over interpolated frames only. To do this, the original video sequences are first decimated by a factor of 2, and the dropped frames are then recovered using FRC. The interpolated frames are then compared with the corresponding frames of the original sequences using the Y-PSNR metric.

The vertical axis shows the average Y-PSNR value for each sequence; the horizontal axis lists the test sequence names. As the diagram shows, the developed algorithm (AFRC) achieves the best objective quality.

Pic. 17 Objective comparison result
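The evaluation procedure described above (decimate by 2, recover the dropped frames, compare them with the originals using Y-PSNR) can be sketched as follows. This is a minimal illustration under stated assumptions: frames are 8-bit luma planes stored as 2D lists, all names (`y_psnr`, `blend`, `evaluate`) are hypothetical, and `blend` is a naive per-pixel average used only as a stand-in interpolator. It is not the AFRC algorithm, which is motion-compensated.

```python
import math


def y_psnr(ref, test, peak=255):
    """PSNR in dB between two luma planes given as equal-sized 2D lists."""
    total = 0.0
    count = 0
    for row_ref, row_test in zip(ref, test):
        for a, b in zip(row_ref, row_test):
            total += (a - b) ** 2
            count += 1
    mse = total / count
    if mse == 0:
        return math.inf  # identical planes
    return 10 * math.log10(peak ** 2 / mse)


def blend(prev, nxt):
    """Stand-in interpolator (assumption): average of the two reference frames."""
    return [[(a + b) / 2 for a, b in zip(rp, rn)] for rp, rn in zip(prev, nxt)]


def evaluate(frames, interpolate=blend):
    """Average Y-PSNR over the frames that were dropped and then recovered."""
    kept = frames[::2]  # decimation by a factor of 2: keep even-indexed frames
    scores = []
    for k in range(len(kept) - 1):
        original = frames[2 * k + 1]  # the frame dropped between kept[k] and kept[k + 1]
        recovered = interpolate(kept[k], kept[k + 1])
        scores.append(y_psnr(original, recovered))
    if not scores:
        raise ValueError("need at least three frames to evaluate")
    return sum(scores) / len(scores)
```

Any interpolation method with the same two-frame interface can be passed as `interpolate`, so that different methods are scored on exactly the same dropped frames.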

Publications

- D. Vatolin, S. Grishin, "N-times Video Frame-rate Up-conversion Algorithm based on Pixel Motion Compensation with Occlusions Processing", Graphicon, International Conference on Computer Graphics & Vision, July 2006, pp. 112-119. (Russian)

- D. Vatolin, S. Grishin, "Video Frame Rate Conversion Method Based on Compensated Frames Interpolation", "New Information Technologies in Automated Systems", Seminar at M.V. Keldysh Institute of Applied Mathematics, March 2006, pp. 32-46. (Russian)

Download

For commercial license of this filter please contact us via


Project updated by Server Team and MSU Video Group

Project sponsored by YUVsoft Corp.

Project supported by MSU Graphics & Media Lab